Subject archive for "machine-learning," page 3

Domino Unlocks the Power of Data Science with Ray 2 Clusters

OpenAI demonstrated the profound impact generative AI could have. Such techniques turn datasets into transformative tools and products: tangible AI projects that are both inspiring and able to save your company time and money. Better yet, you are in a good position to aim high.

By Thomas Dinsmore and Yuval Zukerman | 5 min read

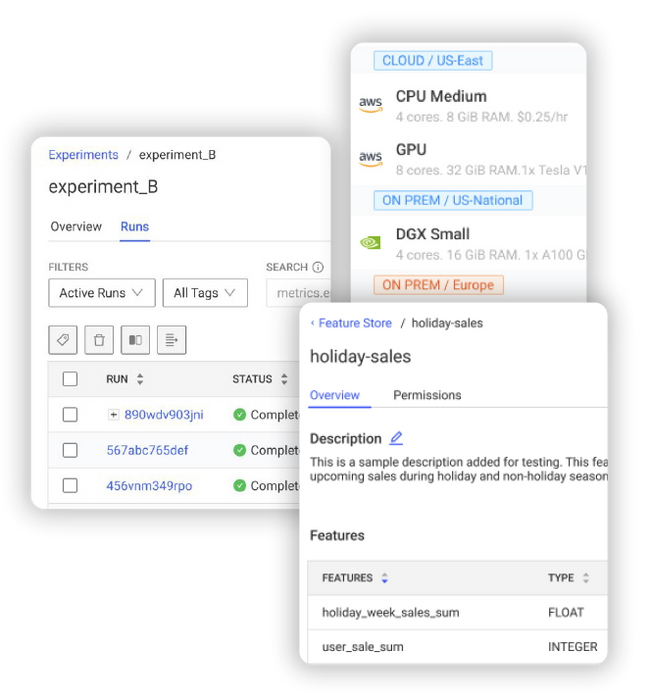

Domino’s Spring 2023 Release Drives Faster Innovation with Cutting-Edge AI for Every Enterprise

In today's economic environment, all organizations need to unlock greater AI value, faster. 98% of CDOs and CDAOs say the companies that bring AI and ML solutions to market fastest will be the ones who survive and thrive in the upcoming times of economic uncertainty. More than ever, organizations need to scale the creation and operationalization of ML models across their businesses and bring to bear the latest, most powerful AI methods and tools.

By Kjell Carlsson | 10 min read

Feature extraction and image classification using Deep Neural Networks and OpenCV

In a previous blog post we talked about the foundations of computer vision, the history and capabilities of the OpenCV framework, and how to take your first steps in accessing and visualising images with Python and OpenCV. Here we dive deeper into using OpenCV and DNNs for feature extraction and image classification.

By Dr Behzad Javaheri | 13 min read

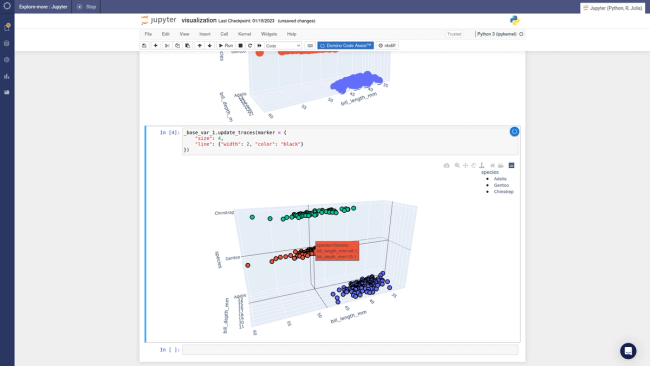

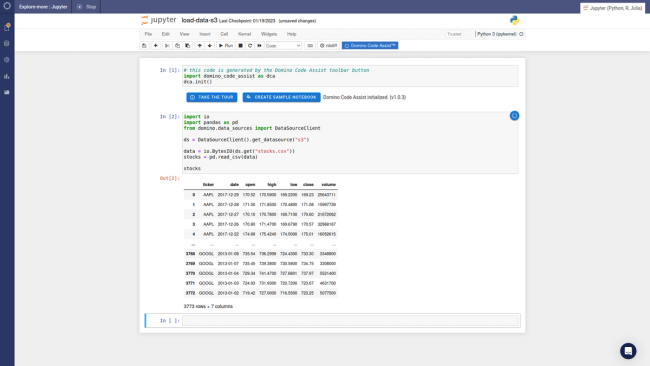

Take a look at Domino Code Assist

A picture is worth 1000 words, so let's get right into exploring Domino Code Assist (DCA). As I mentioned in my prior blog, with DCA you can import a dataset, make a few data visualizations, and deploy those data visualizations as a Python data app - all through a point-and-click interface. At the end of this, you have a perfectly executable Python or R script that follows the steps that you took in the UI.

By Jack Parmer | 3 min read

Getting to the Good Stuff with Domino Code Assist

My name’s Jack Parmer, and I’m the former CEO and co-founder of Plotly.

By Jack Parmer | 6 min read

Python is the New Excel

It's becoming clear that the traditional “citizen data scientist” approach, focusing on no-code tools, has become an evolutionary dead end. Organizations that have pursued this route have little to show beyond PoCs and one-off successes, despite years of investment in training and in underutilized, proprietary tools. The best that can be said is that these efforts have been a costly way of democratizing data prep and business intelligence. In reality, they have been a step in the wrong direction for analytics and data science maturity.

By Kjell Carlsson | 7 min read
