Cut cloud costs by enabling spot instances on Domino

Authors

Vaibhav Dhawan

Principal Solution Architect

Sameer Wadkar

Principal Solution Architect

Article topics

Cost, distributed compute, cloud compute

Intended audience

Administrators, data scientists

Overview and goals

Spot instances are a compute provisioning option offered by AWS that can provide significant discounts compared to standard on-demand pricing, making them an attractive choice for fault-tolerant workloads. They are priced lower because they draw on unused capacity in AWS data centers. When that capacity is needed to fulfill on-demand instance requests, AWS can reclaim spot instances on short notice, potentially interrupting running workloads.

Understanding why spot instances work well

The primary use case for spot instances is cost savings, with discounts of up to 90% over standard on-demand pricing. AWS provides a tool called the Spot Instance Advisor that allows you to evaluate average discounts and interruption frequency across different instance types, helping you assess the risk versus savings tradeoff for your workloads.
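To make the risk-versus-savings tradeoff concrete, the sketch below estimates what a job costs on spot capacity once interruptions are taken into account. The hourly price, discount, and rework fraction are hypothetical placeholders rather than AWS figures; take real discounts and interruption frequencies from the Spot Instance Advisor.

```python
def effective_spot_cost(on_demand_hourly, discount, job_hours, rework_fraction):
    """Estimate the cost of running a job on spot instances.

    on_demand_hourly: on-demand price per hour (hypothetical input)
    discount: spot discount as a fraction, e.g. 0.70 for 70% off
    job_hours: runtime of the job without interruptions
    rework_fraction: extra runtime caused by interruptions, as a
        fraction of job_hours (an assumption you have to estimate)
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in the range [0, 1)")
    spot_hourly = on_demand_hourly * (1 - discount)
    total_hours = job_hours * (1 + rework_fraction)
    return spot_hourly * total_hours


def break_even_rework(discount):
    """Rework fraction at which spot costs the same as on-demand."""
    return discount / (1 - discount)


# Hypothetical job: $1.00/hour on-demand, 10 hours, 70% discount,
# 20% extra runtime lost to interruptions.
on_demand_cost = 1.00 * 10
spot_cost = effective_spot_cost(1.00, 0.70, 10, 0.20)
print(f"on-demand: ${on_demand_cost:.2f}, spot: ${spot_cost:.2f}")
# prints: on-demand: $10.00, spot: $3.60
```

Under this simple model, a 70% discount only stops paying off once interruptions more than triple the runtime (`break_even_rework(0.70)` is roughly 2.33). The model ignores the cost of lost work for users, which often matters more than the compute bill.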

Choosing the right workload for spot instances in Domino

Spot instances are best suited for fault-tolerant workloads that can withstand the sudden interruption of an underlying node. The following workload types are good candidates:

1. Distributed compute (Spark and Ray): These frameworks are designed to handle node failures. You should configure the workers to use spot instances, while keeping the head nodes on on-demand instances. If a worker node is reclaimed, the cluster framework simply reschedules the tasks on the remaining nodes without failing the entire job.

2. Interactive workspaces: Workspaces in Domino are backed by persistent volumes (e.g., EBS). If you are price-sensitive and tolerant of occasional interruptions, spot instances can drastically reduce costs. If the instance is reclaimed, the workspace shuts down, but the data in the volume is preserved for the next session.

3. Apps and model endpoints: Hosting apps or models on spot instances is viable if your service level agreement (SLA) allows for brief downtime during the replacement window (the time between an instance being reclaimed and a new one spinning up).

You can configure your hardware tier to prioritize spot instances but fall back to on-demand instances. The fallback applies in two situations:

- At launch: If spot instance capacity is unavailable, Domino immediately provisions an on-demand node to prevent resource starvation.

- During interruption: If a running spot instance is reclaimed and no other spot instance capacity is available, Domino can replace it with an on-demand instance to restore service quickly.
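The fallback behavior described above can be summarized as a small decision function. This is a toy model for reasoning about outcomes, not Domino's scheduling code; the function name and return values are illustrative assumptions.

```python
def resolve_capacity(spot_available, on_demand_fallback):
    """Return where a workload lands: 'spot', 'on-demand', or 'pending'.

    spot_available: whether spot capacity can be provisioned right now
    on_demand_fallback: whether the hardware tier has an on-demand
        node pool to fall back to
    """
    if spot_available:
        return "spot"
    if on_demand_fallback:
        return "on-demand"
    # No spot capacity and no fallback: the workload waits.
    return "pending"


# The same decision applies at launch and after an interruption.
for spot in (True, False):
    for fallback in (True, False):
        outcome = resolve_capacity(spot, fallback)
        print(f"spot_available={spot}, fallback={fallback} -> {outcome}")
```

The only combination that leaves a workload waiting is no spot capacity together with no on-demand fallback, which is why the fallback node pool is worth configuring for anything user-facing.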

Rule of thumb: Ask yourself, "How tolerant is this workload to sudden instance loss?" If an interruption could result in losing hours of unsaved work, do not use spot instances. If the workload can recover automatically or the cost of interruption is acceptable, use spot instances to save costs.

How to use spot instances with Domino

Your cluster administrator will need to enable spot instance support in your cluster. This feature is available in Domino 6.2 and above, and requires enabling the “ShortLived.EnableCapacityType” feature flag.

Depending on your specific Domino deployment setup, there are a few options:

Using the Kubernetes Cluster Autoscaler:

Step 1: Create a new EKS node group

Create a new EKS node group, selecting your target instance type and setting the Capacity Type to “spot”. Set the label “dominodatalab.com/node-pool” to a new value such as “flex”, and set “dominodatalab.com/capacity-type” to “spot”. You can reuse your Amazon Machine Image (AMI), launch template, or any other configurations from existing node pools.

Step 2: Create a new hardware tier in Domino

Create a new hardware tier in Domino, setting the label value from above under the “node pool” input. Enable the “configured with spot instance support” option.

Step 3: (Optional) Configure on-demand fallback

You can create an additional node group identical to the one in step 1, with the capacity type set to “on-demand” and the label “dominodatalab.com/capacity-type” set to “on-demand”. This allows workloads to fall back to on-demand instances when spot capacity is unavailable. Without this step, workloads assigned to this hardware tier will remain in a pending state until spot capacity becomes available.
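As a minimal sketch of steps 1 and 3, the helper below builds the request parameters for an EKS managed node group, assuming you create node groups through boto3's `create_nodegroup` call. The cluster name, subnet IDs, role ARN, instance types, and scaling sizes are hypothetical placeholders; if your deployment uses Terraform, eksctl, or self-managed node groups, the same capacity type and labels apply but the mechanics differ.

```python
def build_nodegroup_request(cluster, name, capacity_type, node_pool,
                            instance_types, subnets, node_role_arn,
                            min_size=0, max_size=5):
    """Build keyword arguments for boto3's EKS create_nodegroup call."""
    if capacity_type not in ("spot", "on-demand"):
        raise ValueError("capacity_type must be 'spot' or 'on-demand'")
    return {
        "clusterName": cluster,
        "nodegroupName": name,
        # EKS expects SPOT or ON_DEMAND for the capacity type.
        "capacityType": "SPOT" if capacity_type == "spot" else "ON_DEMAND",
        "instanceTypes": instance_types,
        "subnets": subnets,
        "nodeRole": node_role_arn,
        "scalingConfig": {
            "minSize": min_size,
            "maxSize": max_size,
            "desiredSize": min_size,
        },
        # Labels referenced by the Domino hardware tier.
        "labels": {
            "dominodatalab.com/node-pool": node_pool,
            "dominodatalab.com/capacity-type": capacity_type,
        },
    }


# Hypothetical values shared by both node groups.
common = dict(
    cluster="my-domino-cluster",
    node_pool="flex",
    instance_types=["m5.2xlarge"],
    subnets=["subnet-EXAMPLE"],
    node_role_arn="arn:aws:iam::111122223333:role/example-node-role",
)
spot_request = build_nodegroup_request(
    name="flex-spot", capacity_type="spot", **common)
fallback_request = build_nodegroup_request(
    name="flex-on-demand", capacity_type="on-demand", **common)

# To submit (requires AWS credentials and the boto3 package):
#   import boto3
#   boto3.client("eks").create_nodegroup(**spot_request)
```

Both node groups share the same “dominodatalab.com/node-pool” value, so a single hardware tier can target them; only the capacity type and its label differ.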

Using AWS Karpenter:

Karpenter offers several benefits over the Cluster Autoscaler when configuring node pools. You can define a single node pool covering a wide range of available instance types, allowing the scheduler to pick the best available node size for your executions. Node pools can have limits on the total CPU and memory across all instance types, and they can be configured to behave exactly like the Cluster Autoscaler when specific instance types are required.

Some example node pool configurations are available in the GitHub repo for this blueprint.

Once you have set up your new node pool, create a new hardware tier in Domino and set the value under the “node pool” input to the value chosen for the “dominodatalab.com/node-pool” label in Karpenter. Enable the “configured with spot instance support” option.
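For illustration, the sketch below generates a Karpenter NodePool manifest as JSON, which `kubectl apply -f` accepts alongside YAML. It assumes the Karpenter v1 API (`karpenter.sh/v1`) and an existing EC2NodeClass; the pool name, node class name, and limits are hypothetical, and the label key follows the “dominodatalab.com/node-pool” form used in the Cluster Autoscaler steps. Treat the example configurations in the blueprint's repository as the reference.

```python
import json


def build_spot_nodepool(name, node_pool_label, node_class,
                        cpu_limit, memory_limit, allow_on_demand=False):
    """Build a Karpenter v1 NodePool manifest as a Python dict."""
    capacity_types = ["spot", "on-demand"] if allow_on_demand else ["spot"]
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "metadata": {
                    # Value entered in the Domino hardware tier.
                    "labels": {"dominodatalab.com/node-pool": node_pool_label},
                },
                "spec": {
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": node_class,
                    },
                    "requirements": [
                        {
                            "key": "karpenter.sh/capacity-type",
                            "operator": "In",
                            "values": capacity_types,
                        },
                    ],
                },
            },
            # Caps on total CPU and memory across all nodes in the pool.
            "limits": {"cpu": cpu_limit, "memory": memory_limit},
        },
    }


manifest = build_spot_nodepool(
    name="flex-spot",            # hypothetical pool name
    node_pool_label="flex",
    node_class="default",        # hypothetical EC2NodeClass name
    cpu_limit="256",
    memory_limit="1024Gi",
    allow_on_demand=True,
)
print(json.dumps(manifest, indent=2))
```

Listing both capacity types in one requirement lets Karpenter fall back to on-demand capacity when spot is unavailable; omit `allow_on_demand` to keep the pool spot-only.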

Using Domino Cloud:

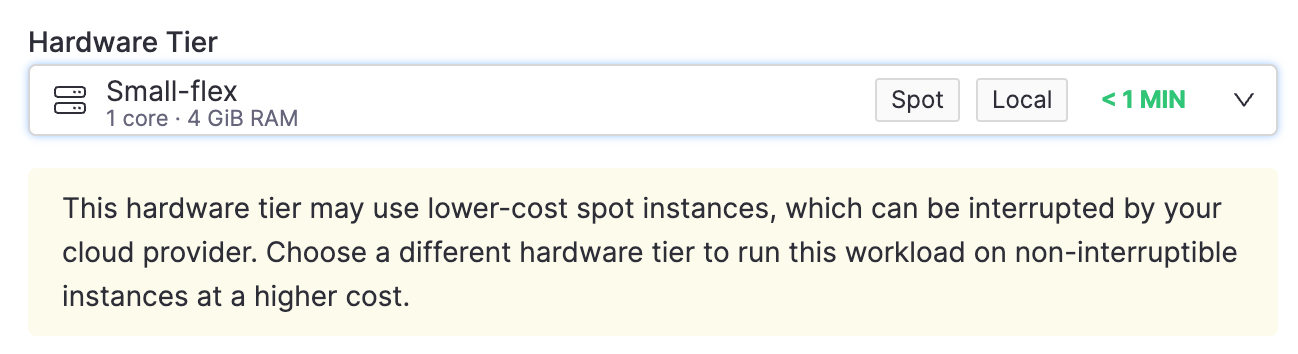

Domino’s documentation provides a guide and best practices for setting up spot instance-enabled node pools and hardware tiers from your cloud admin portal. Once the required tiers are configured, users will see a “Spot” label on the hardware tier name, along with a brief note outlining the risks.

Contact your Domino Professional Services team for help configuring spot node pools, evaluating instance types, or setting up fault-tolerant workloads and pipelines.

Check out the GitHub repo

Vaibhav Dhawan

Principal Solution Architect

I support large and complex customer deployments, helping them meet their requirements for security, cost, tool integration, data, and processes, both in the cloud and on-prem. A number of these solutions and best practices are packaged into reusable Blueprints for our larger customer base, and some are later integrated into the Domino platform.

Sameer Wadkar

Principal Solution Architect

I work closely with enterprise customers to deeply understand their environments and enable successful adoption of the Domino platform. I've designed and delivered solutions that address real-world challenges, with several becoming part of the core product. My focus is on scalable infrastructure for LLM inference, distributed training, and secure cloud-to-edge deployments, bridging advanced machine learning with operational needs.