Claude Code on Domino: AI-assisted development with Domino skills

Authors

Paul Jojy

Andrea Lowe

Article topics

AI coding assistants, Claude Code, Domino Skills, developer productivity, MLOps automation, agentic development

Intended audience

Data scientists, ML engineers, AI engineers

Overview and goals

The challenge

AI coding assistants are now standard in data science workflows, but most operate without awareness of the platform on which they're deployed. When you use Claude Code, Copilot, or Codex to scaffold a model endpoint or write a training script, the assistant doesn't know how your platform manages environments, routes API calls, tracks experiments, or enforces governance. The result is generic code that requires significant manual rework to align with platform conventions. Your AI coding assistant knows how to write Python. It doesn’t know how Domino works.

The solution

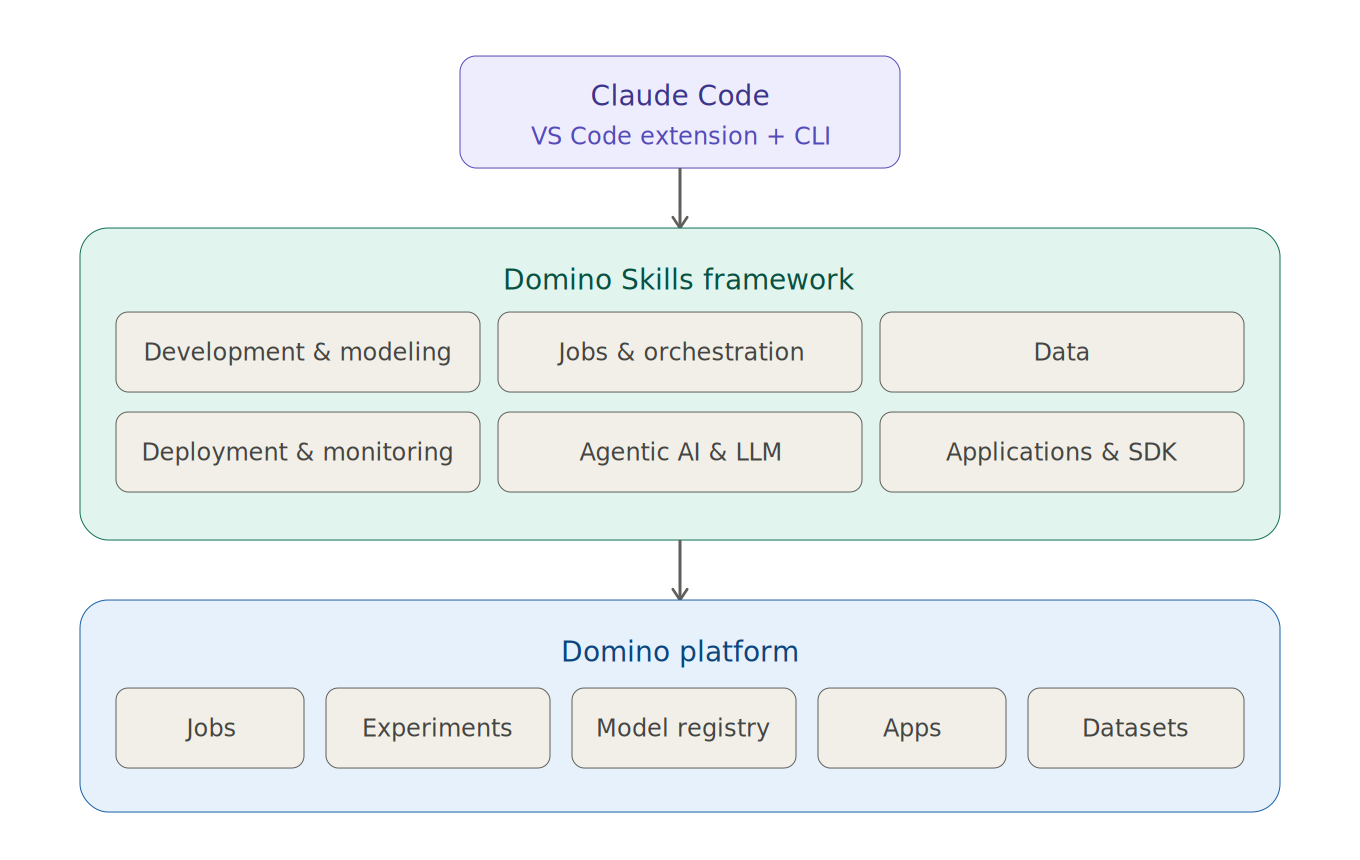

Domino Skills give Claude Code deep, platform-aware context. The framework provides 20+ platform-specific actions spanning workspaces, environments, experiment tracking, model serving, agentic AI tracing, app deployment, and distributed computing.

Skills are currently optimized for Claude Code, which is the focus of this blueprint, but are also compatible with OpenAI Codex.

With Domino Skills installed and configured (or confirmed in your Domino Cloud environment), you can:

- Scaffold and deploy model endpoints, scheduled jobs, and web apps using natural language prompts

- Set up experiment tracking and agentic AI tracing with minimal boilerplate

- Extend the skills framework with custom skills, hooks, and output styles to fit your team's needs

- Route inference through Domino AI Gateway or a self-hosted LLM (see the Deploying Self-Hosted LLMs Blueprint)

What are Skills and how does Domino use them?

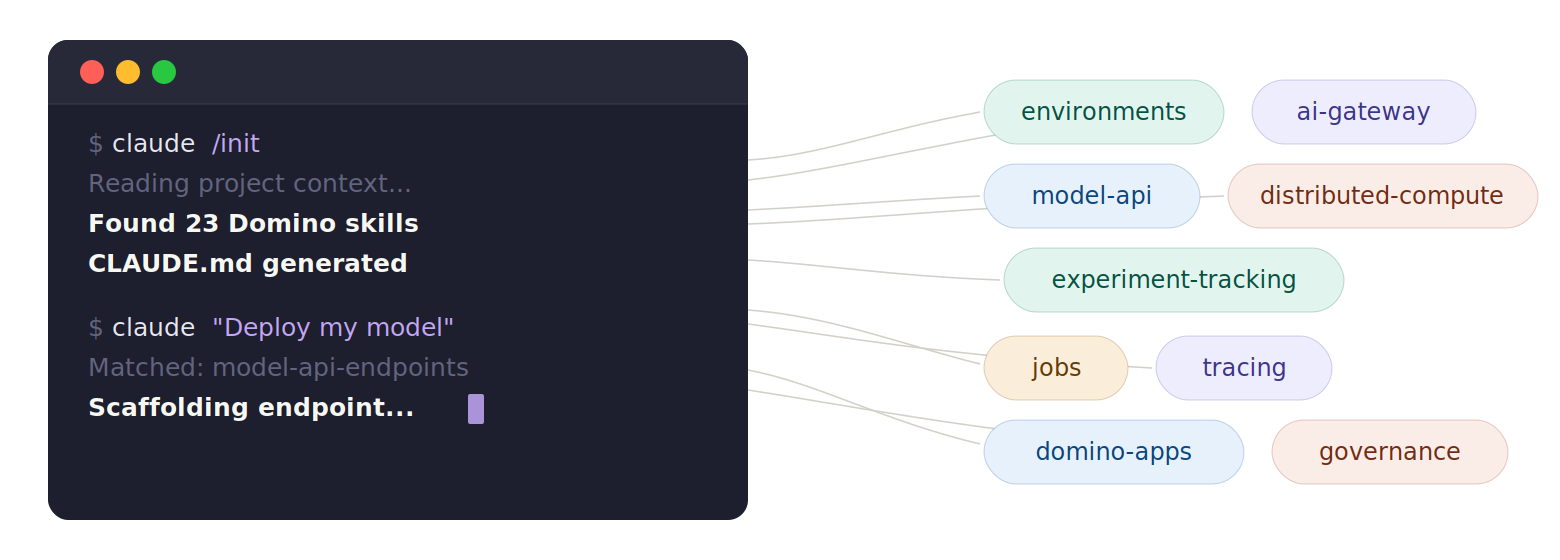

A skill is a structured markdown file that teaches Claude Code how to perform a specific task on the Domino platform. Each skill contains context about relevant APIs, configuration patterns, and best practices. At workspace startup, Claude Code reads the available skills and uses them to understand your project context, generating platform-native code, submitting jobs, configuring environments, and interacting directly with Domino resources. Skills are maintained in the open-source domino-claude-plugin repository alongside agents, slash commands, hooks, and output styles that extend Claude Code's behavior on the platform.

Skills are organized into six categories:

- Development & Modeling: AI-assisted model development, experiment tracking setup, environment and workspace management, project configuration

- Jobs & Orchestration: Job execution, multi-step workflow orchestration with Flows, parameterized launchers, distributed computing with Spark, Ray, and Dask

- Data: Versioned dataset management, external data source connectivity, domino-data SDK, and Feature Store

- Deployment & Monitoring: Model API endpoints, drift detection and alerting, web application deployment

- Agentic AI & LLM: AI Gateway access to external LLM providers, agentic AI tracing and evaluation

- Applications & SDK: App scaffolding (Vite+React, Streamlit, Dash, Flask), Domino Design System styling, the python-domino SDK, app routing, and proxy troubleshooting and debugging

How to set up Claude Code with Domino Skills

Note for Domino Cloud customers: Claude Code and Domino Skills come pre-installed in the Domino Standard Environment. If you are on Domino Cloud, skip this section: launch a VS Code workspace, authenticate with your Anthropic account, and start coding. No environment customization or manual plugin installation is required. The setup steps below cover manual configuration for self-managed deployments or teams extending the default configuration.

For self-managed deployments, you'll create a Compute Environment that includes Claude Code and the Domino Skills framework. This is a one-time setup: once built, every workspace launched from it will have Claude Code ready with full platform awareness. Most Domino users can create and edit environments, though some organizations restrict this to administrators. In practice, having an admin configure the environment centrally is common, since it ensures a consistent setup across the team without requiring each user to build their own.

Add the following to your environment's Dockerfile Instructions (see Customize your Environment for detailed instructions on editing environments):

    # Install Claude Code (native installer, no Node.js required)
    USER root
    RUN apt-get update && \
        apt-get install -y ripgrep && \
        rm -rf /var/lib/apt/lists/*
    USER ubuntu
    RUN curl -fsSL https://claude.ai/install.sh | bash

    # Set environment variables
    ENV PATH="/home/ubuntu/.claude/bin:/home/ubuntu/.local/bin:$PATH"

    # (Optional) Install Domino MCP server dependencies
    USER root
    RUN pip install --no-cache-dir fastmcp requests python-dotenv
    USER ubuntu

The Domino Skills framework also requires a pre-run script that handles Claude Code installation (if not already present in the image), per-user credential isolation, persistence of preferences across workspace restarts, and plugin registration (skills, commands, agents, and output styles).

Append the script below to the end of the environment's Pre Run Script section.

    #!/bin/bash
    # Remove any stale copy of the plugin, then clone a fresh one
    echo "[!] Installing Domino Claude plugin..."
    if [ -e /mnt/code/domino-claude-plugin ]; then
        rm -rf /mnt/code/domino-claude-plugin
    fi
    git clone -b consolidate https://github.com/dominodatalab/domino-claude-plugin.git /mnt/code/domino-claude-plugin
    echo "[!] Domino Claude plugin installed"

    # Run the plugin's workspace setup, then remove the clone
    bash /mnt/code/domino-claude-plugin/setup/start_workspace.sh
    rm -rf /mnt/code/domino-claude-plugin

How to use Claude Code with Domino Skills

With your environment configured, Claude Code can interact directly with Domino resources via natural-language prompts and slash commands.
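Before issuing prompts, it can help to confirm that the tools the environment is meant to provide are actually on the PATH. The following is a minimal sketch and not part of the Domino plugin: the helper name `check_tool` is hypothetical, and the tool list assumes the Dockerfile instructions above (`claude` from the native installer, `rg` from the ripgrep package).

```shell
#!/bin/bash
# Hypothetical helper: report whether a command is available on PATH.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "[ok] $1 found at $(command -v "$1")"
        return 0
    else
        echo "[missing] $1 is not on PATH"
        return 1
    fi
}

# Tools the environment described above is expected to provide (assumption).
missing=0
for tool in claude rg git; do
    check_tool "$tool" || missing=$((missing + 1))
done
echo "$missing tool(s) missing"
```

If `claude` is reported missing, the PATH entry from the Dockerfile instructions is a reasonable first thing to check.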

Initialize the project

Start every project with /init. This instructs Claude Code to read your codebase, analyze any existing code and plan files, and understand the project's intent. It generates a CLAUDE.md file that serves as a persistent reference, keeping the agent aligned with your project across sessions.

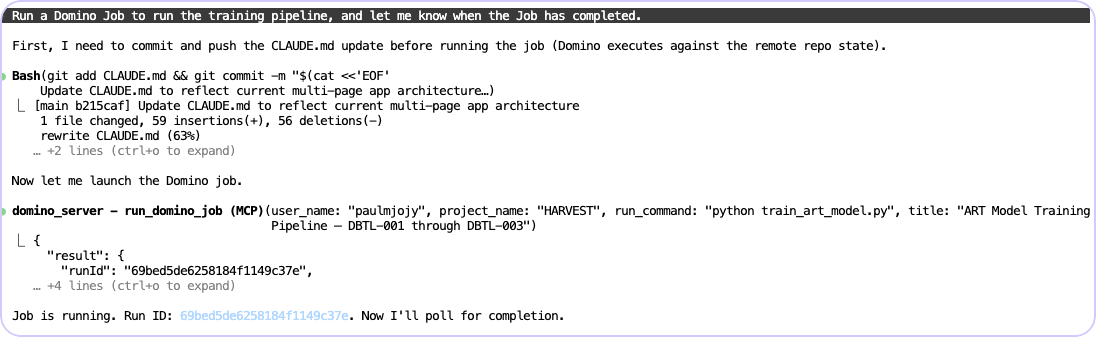

Run Domino Jobs

Configure, submit, and monitor Domino Jobs directly from your workspace. Claude Code handles job configuration and tracks execution through MCP calls, so you don't need to switch to the Domino UI or write boilerplate submission scripts.

Run a Domino Job to run the training pipeline, and let me know when the Job has completed.

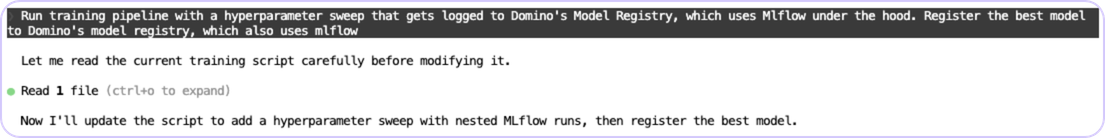

Set up experiment tracking and model registration

Claude Code can scaffold MLflow-based experiment tracking, log parameters and metrics, and register trained models to the Domino Model Registry.

Run the training pipeline with a hyperparameter sweep that gets logged to Domino's experiment tracking, which uses MLflow under the hood. Register the best model to Domino's Model Registry, which also uses MLflow.

Implement agentic AI tracing

Set up tracing for agentic AI applications using the Domino GenAI SDK. Claude Code generates the instrumentation code, configures evaluators, and connects the tracing pipeline. For a detailed walkthrough of experiment tracking and evaluation in Domino, see the Agentic AI Tracing Blueprint.

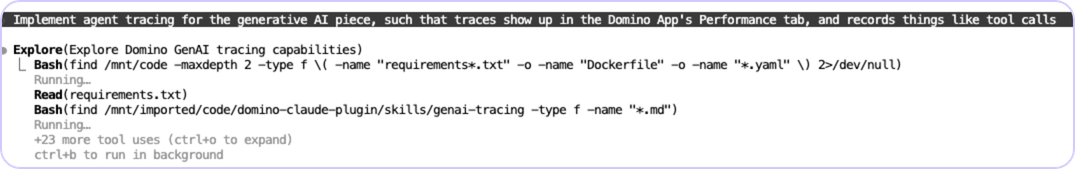

Implement agent tracing for the generative AI piece, such that traces show up in the Domino App's Performance tab and record details such as tool calls.

Deploy Domino Apps

Scaffold and deploy web applications (Streamlit, Dash, Flask, or Vite+React) as Domino Apps. Claude Code generates the application code, configures the app publication settings, and manages Domino-specific routing and proxy requirements.

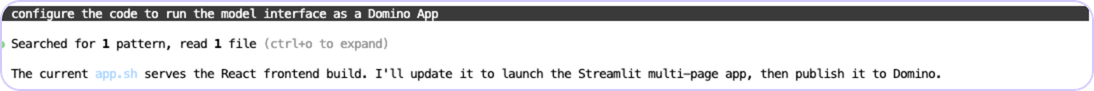

Configure the code to run the model interface as a Domino App, designed like the Domino UI

Extending Domino Skills with custom skills, hooks, and output styles

The domino-claude-plugin is designed to be extended. The repo ships 20+ built-in skills, two output styles, and an example hooks directory, all working templates you can fork and tailor to your team's workflows. The three extension points are independent: you can add any one without touching the others.

Custom Skills

Skills activate automatically when their description matches the task context. When Claude Code receives a request, it reviews available skill descriptions. If a match is found, it loads the full skill instructions and applies them transparently. You never have to invoke a skill explicitly.

Good candidates for custom team skills include:

- Your organization's hardware tier naming conventions and when to use each tier

- Internal data source naming patterns and connection boilerplate

- Your team's MLflow experiment naming and tagging standards

- Internal app deployment checklists and approval processes

Custom skills work best when they are focused and scoped. Best practice is to keep SKILL.md under 500 lines and move detailed reference material to separate files. Only the name and description are loaded initially; the full content is loaded on demand. Skills can also reference each other to compose more complex behaviors. See this guide for detailed instructions on creating a skill.
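As an illustration of the shape described above, the sketch below writes a small team skill to disk. It assumes the common layout of one directory per skill containing a `SKILL.md` with `name` and `description` frontmatter; the skill name, the tier names, and the `reference.md` file are hypothetical placeholders rather than anything shipped in the plugin.

```shell
#!/bin/bash
# Assumption: skills live at skills/<skill-name>/SKILL.md. A temporary
# directory stands in for the plugin or project root in this sketch.
SKILLS_ROOT="$(mktemp -d)/skills"
SKILL_DIR="$SKILLS_ROOT/hardware-tier-guide"
mkdir -p "$SKILL_DIR"

# The frontmatter name and description are what get loaded first, so the
# description should state when the skill applies.
cat > "$SKILL_DIR/SKILL.md" <<'EOF'
---
name: hardware-tier-guide
description: Choose a Domino hardware tier using our team's naming conventions. Use when submitting jobs or sizing workspaces.
---

# Hardware tier guide (hypothetical team conventions)

- `small-cpu`: exploratory notebooks and unit tests
- `large-cpu`: feature engineering on full datasets
- `gpu-single`: model training and fine-tuning

For per-tier quotas and cost guidance, see reference.md in this directory.
EOF

# Guard for the 500-line guideline mentioned above.
line_count=$(wc -l < "$SKILL_DIR/SKILL.md" | tr -d ' ')
if [ "$line_count" -ge 500 ]; then
    echo "SKILL.md has $line_count lines; move detail into reference files" >&2
else
    echo "Wrote $SKILL_DIR/SKILL.md ($line_count lines)"
fi
```

Keeping the description specific about when the skill applies matters more than the body length, since the description is what drives automatic activation.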

Custom Hooks

Hooks make automation deterministic. Where a prompt is a request, a hook is a guarantee: every time Claude Code writes a file or runs a command, the hook fires unconditionally.

Define hooks in hooks/hooks.json with an optional top-level description field. When the plugin is enabled, its hooks merge with your user and project hooks. Use ${CLAUDE_PLUGIN_ROOT} to reference scripts bundled with the plugin.

The available hook events and their Domino use cases:

| Event | Fires when | Domino use case |
| --- | --- | --- |
| PreToolUse | Before any tool runs | Block writes to protected project paths; validate API key is set before Domino SDK calls |
| PostToolUse | After a tool completes | Auto-commit experiment artifacts; trigger notifications on job completion |
| SessionStart | When a Claude Code session begins | Load DOMINO_API_KEY and DOMINO_API_HOST from env; inject current project context |
| UserPromptSubmit | A user submits a prompt | Prepend team-specific context or Domino environment info to every request |
| Stop | When Claude finishes a task | Post a summary to a Slack channel; log completions to a Domino dataset |

Hooks are loaded when Claude Code starts, so changes require a session restart. Use the /hooks command to review loaded hooks in the current session. See the Claude Code hook development guide for the full specification.
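To make the PreToolUse use case concrete, here is a hedged sketch of a hook that refuses to proceed when DOMINO_API_KEY is unset. The script name `require-domino-key.sh`, the `Bash` matcher, and the description text are assumptions chosen for illustration. The sketch also assumes the Claude Code convention that a hook exiting with code 2 blocks the tool call and surfaces stderr to the agent; check the hook specification before relying on it.

```shell
#!/bin/bash
# A temporary directory stands in for the plugin root in this sketch.
PLUGIN_ROOT="$(mktemp -d)"
HOOK="$PLUGIN_ROOT/scripts/require-domino-key.sh"
mkdir -p "$PLUGIN_ROOT/hooks" "$PLUGIN_ROOT/scripts"

# Hypothetical hook script: exit 2 (assumed to mean "block") when the key is unset.
cat > "$HOOK" <<'EOF'
#!/bin/bash
if [ -z "${DOMINO_API_KEY:-}" ]; then
    echo "DOMINO_API_KEY is not set; export it before calling Domino APIs." >&2
    exit 2
fi
exit 0
EOF
chmod +x "$HOOK"

# Register the script for PreToolUse. The quoted heredoc keeps
# ${CLAUDE_PLUGIN_ROOT} literal so it is resolved by Claude Code, not this shell.
cat > "$PLUGIN_ROOT/hooks/hooks.json" <<'EOF'
{
  "description": "Hypothetical team guardrails for Domino projects",
  "hooks": {
    "PreToolUse": [
      {
        "matcher": "Bash",
        "hooks": [
          {
            "type": "command",
            "command": "${CLAUDE_PLUGIN_ROOT}/scripts/require-domino-key.sh"
          }
        ]
      }
    ]
  }
}
EOF

# Exercise the script directly, outside Claude Code, without and with the key.
status=0
env -u DOMINO_API_KEY bash "$HOOK" 2>/dev/null || status=$?
echo "without key: exit $status"
status=0
DOMINO_API_KEY=example bash "$HOOK" || status=$?
echo "with key: exit $status"
```

Running the script by hand like this is a cheap way to test a hook's logic before restarting a session to load it.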

Custom Output Styles

Output styles modify Claude Code's system prompt to change response formatting without affecting core functionality. Each style is a markdown file with YAML frontmatter defining the name, description, and formatting instructions. Users switch styles with /output-style <name> during a session.

Two built-in styles are available in the output-styles/ directory:

- domino-learning: Educational mode that appends a "Domino Insight" after each task, explaining the underlying platform concept. Well-suited for onboarding new data scientists to the platform.

- domino-mlops: Production-focused mode that appends MLOps checklists and best-practice reminders. Well-suited for teams preparing model deployments for review.

These two styles illustrate the range of what's possible. Consider creating additional styles for your team, such as:

- A verbose mode that includes the equivalent Domino API call or python-domino SDK snippet alongside every action (useful for teams learning the API)

- A terse mode with just the code and commands for experienced users who want speed

- A regulated mode that appends data governance reminders such as data classification, PII warnings, and lineage tracking to any response involving datasets or model outputs
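A custom style can be as small as a few lines. The sketch below writes a hypothetical terse style, assuming the structure described above: a markdown file with YAML frontmatter (`name`, `description`) followed by formatting instructions. The style name `domino-terse` and its instructions are illustrative, not part of the plugin.

```shell
#!/bin/bash
# A temporary directory stands in for the plugin's output-styles/ directory.
STYLES_DIR="$(mktemp -d)/output-styles"
mkdir -p "$STYLES_DIR"

# Hypothetical "terse" style: frontmatter first, formatting instructions after.
cat > "$STYLES_DIR/domino-terse.md" <<'EOF'
---
name: domino-terse
description: Code and commands only, for experienced Domino users
---

Respond with the code or command needed to complete the task.
Omit explanations unless the user asks for them.
Flag destructive actions (deleting datasets, stopping jobs) in one line before running them.
EOF

echo "Wrote $STYLES_DIR/domino-terse.md"
```

With a file like this in place, a user would select it with /output-style domino-terse during a session.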

How to use Claude Code with a locally hosted LLM

Routing inference through Domino

By default, Claude Code sends inference requests directly to Anthropic's API. In enterprise environments, teams often need more control over where that traffic goes, whether for auditability, access controls, or to route requests to models hosted on their own infrastructure.

Route through Domino AI Gateway

The Domino AI Gateway is an enterprise proxy layer that centralizes authentication, enforces access controls, and logs all LLM interactions for auditability. To route Claude Code traffic through the gateway rather than directly to Anthropic, set the base URL to your gateway endpoint:

    export ANTHROPIC_BASE_URL="https://your-domino-host/ai-gateway/endpoint"

Because Claude Code only requires that the endpoint respond in the Anthropic Messages API format, the gateway can route to any compatible backend: Anthropic's API, Google Vertex AI, Amazon Bedrock, or a model hosted on your own infrastructure. This gives your organization a single control plane for all LLM traffic, regardless of provider, without changing how Claude Code behaves.
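Since a mistyped base URL silently changes where prompts are sent, a small guard before launch can be worthwhile. This is a sketch only: `is_https_url` is a hypothetical helper, and the URL is the placeholder from the text above rather than a real gateway path.

```shell
#!/bin/bash
# Hypothetical guard: succeed only for a non-empty https URL.
is_https_url() {
    case "$1" in
        https://?*) return 0 ;;
        *) return 1 ;;
    esac
}

# Placeholder endpoint from the text above; substitute your gateway URL.
export ANTHROPIC_BASE_URL="https://your-domino-host/ai-gateway/endpoint"

if is_https_url "$ANTHROPIC_BASE_URL"; then
    echo "Claude Code traffic will be sent to $ANTHROPIC_BASE_URL"
else
    echo "ANTHROPIC_BASE_URL must be an https URL" >&2
fi
```

The check is purely syntactic; it does not confirm that the gateway is reachable or that your credentials are accepted.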

Configuring a self-hosted model backend

Claude Code is, at its core, a client that speaks the Anthropic Messages API. It does not verify that a Claude model is on the other end. If your inference server responds in the correct format, Claude Code will connect to it, use its tools, and treat whatever model is running as its backend.

Domino's LLM hosting lets you deploy open-source models as production endpoints on your own infrastructure behind an OpenAI-compatible API. To point Claude Code at a Domino-hosted model:

    # Point Claude Code to your Domino-hosted LLM
    export ANTHROPIC_BASE_URL="https://your-domino-host/model-endpoint"

    # Launch with your hosted model
    claude --model minimax/M2.5

Where ANTHROPIC_BASE_URL is your Domino-hosted LLM endpoint URL and --model specifies which hosted model to use. Claude Code continues to function as the agentic framework while the underlying model runs entirely on your infrastructure.
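Teams that move between the gateway, a hosted model, and Anthropic's API may find it convenient to wrap the switch in one function. The helper below is a hypothetical convenience, not a Domino or Claude Code feature, and both URLs are the placeholders used in this article.

```shell
#!/bin/bash
# Hypothetical helper: select where Claude Code sends inference requests.
# The URLs are the placeholders used in this article, not real endpoints.
use_backend() {
    case "$1" in
        gateway)
            export ANTHROPIC_BASE_URL="https://your-domino-host/ai-gateway/endpoint" ;;
        hosted)
            export ANTHROPIC_BASE_URL="https://your-domino-host/model-endpoint" ;;
        anthropic)
            # Unset to fall back to Claude Code's default (Anthropic's API).
            unset ANTHROPIC_BASE_URL ;;
        *)
            echo "usage: use_backend gateway|hosted|anthropic" >&2
            return 1 ;;
    esac
    echo "backend: $1 (${ANTHROPIC_BASE_URL:-default})"
}

use_backend hosted
```

After `use_backend hosted`, you would launch Claude Code with the `--model` flag set to your hosted model, as shown above.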

For a detailed walkthrough of deploying and sizing self-hosted models in Domino, see the Deploying Self-Hosted LLMs Blueprint.

Getting the most out of Claude Code on Domino

Claude Code is most effective when you treat it as a collaborative engineering tool rather than a code generator. Agentic engineering is distinct from vibe coding: AI handles implementation while the human owns the architecture, quality, and correctness. The same Claude Code session that writes a training script can also commit to a project repo, submit a job, and modify environment files, so the quality of your inputs directly affects the quality of outputs. The following best practices apply regardless of which skills, hooks, or output styles your team has configured.

- Start with a plan, not a prompt. Write a design doc or spec before prompting anything. Break work into well-defined tasks and decide on the architecture up front. In Domino, this might be a brief CLAUDE.md that describes the project structure, target hardware tier, expected dataset locations, and definition of done.

- Keep tasks small and testable. Each task should fit within a single context window and have a clear pass/fail condition. Decompose large work ("build a model monitoring pipeline") into scoped sub-tasks. Pair every task with tests: with a solid test suite, an agent can iterate until tests pass. Without tests, it will declare "done" on broken code.

- Manage context deliberately. Use /clear between unrelated tasks and lean on the plugin's skills rather than pasting reference documentation inline. Important information gets lost when the window fills up.

- Use version control as your checkpoint. Commit before handing a task to an agent and review the diff when it finishes. Domino's built-in Git integration makes this natural.

- Reserve judgment-heavy work for human-in-the-loop prompting. Architectural decisions and exploratory analysis benefit from staying interactive rather than handing off to an autonomous loop.
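The first practice above mentions a brief CLAUDE.md covering project structure, hardware tier, dataset locations, and definition of done. One possible shape is sketched below; the project name, file paths, and checklist items are hypothetical placeholders, and the angle-bracket values are meant to be filled in for your project.

```shell
#!/bin/bash
# A temporary directory stands in for the project root in this sketch.
PROJECT_ROOT="$(mktemp -d)"

# Every value below is a placeholder to be replaced with project specifics.
cat > "$PROJECT_ROOT/CLAUDE.md" <<'EOF'
# Project: churn-model (hypothetical)

## Structure
- src/train.py: training entry point
- src/features.py: feature engineering
- tests/: unit tests, run with pytest

## Platform
- Target hardware tier: <your tier name>
- Datasets: <path to your mounted dataset>

## Definition of done
- All tests in tests/ pass
- Training run is logged to experiment tracking
- No changes outside src/ and tests/
EOF

echo "Wrote $PROJECT_ROOT/CLAUDE.md"
```

A definition of done written as checkable items gives the agent the clear pass/fail condition that the second practice asks for.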

Ralph Loops

Ralph Loops are a pattern for running Claude Code autonomously through an entire task until it is verifiably complete, rather than stopping after each response and waiting for human input. The plugin intercepts session exits via a Stop hook and automatically re-feeds your prompt while preserving all file modifications and git history between iterations.

Run a loop with /ralph-loop "your task" --max-iterations 20 --completion-promise "DONE". Always set --max-iterations as your primary safety mechanism. A typical task rarely needs more than 20 to 30 iterations. When routing through the Domino AI Gateway, be aware that long-running loops consume gateway quota; coordinate with your admin on per-project limits before running overnight loops.
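The interaction between --max-iterations and --completion-promise can be pictured as a bounded loop with an early exit. The sketch below illustrates that stopping rule only; it is not the plugin's implementation, and `run_agent_once` is a hypothetical stand-in that pretends the task finishes on the third pass.

```shell
#!/bin/bash
# Conceptual sketch of the stopping rule only; this is not the plugin's code.
MAX_ITERATIONS=20
COMPLETION_PROMISE="DONE"

# Hypothetical stand-in for one agent pass. Here it "finishes" on pass 3;
# a real pass would run the agent and capture its final message.
run_agent_once() {
    if [ "$1" -ge 3 ]; then
        echo "All tests pass. DONE"
    else
        echo "Tests still failing on pass $1"
    fi
}

iteration=0
result="max-iterations"
while [ "$iteration" -lt "$MAX_ITERATIONS" ]; do
    iteration=$((iteration + 1))
    output=$(run_agent_once "$iteration")
    # Stop early once the completion promise appears in the output.
    case "$output" in
        *"$COMPLETION_PROMISE"*) result="completed"; break ;;
    esac
done
echo "stopped after $iteration iteration(s): $result"
```

If the promise never appears, the loop ends at the iteration cap, which is why the cap serves as the primary safety mechanism.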

Strong candidates for Ralph Loops in Domino include:

- Migrating experiments between MLflow schemas

- Adding type annotations and docstrings across a module

- Writing test suites for existing training scripts

- Scaffolding Domino Apps from templates

The ralph-wiggum plugin is available in Claude Code's official plugin marketplace. See awesomeclaude.ai/ralph-wiggum for the full specification.

Check out the GitHub repo

Paul Jojy

Sales Engineer, Public Sector

Paul Jojy is a subject matter expert in AI/ML and holds a graduate degree in Artificial Intelligence from Johns Hopkins University. He has led and implemented machine learning initiatives for deployed top secret space programs with total contract values exceeding one billion dollars. Paul brings deep expertise in image processing, signal processing, and generative AI deployment in air-gapped networks, and has received enterprise-level awards for novel work in signal detection.

Andrea Lowe

Product Marketing Director, Data Science/AI/ML

Andrea Lowe, PhD, is Product Marketing Director, Data Science/AI/ML at Domino Data Lab where she develops training on topics including overviews of coding in Python, machine learning, Kubernetes, and AWS. She trained over 1000 data scientists and analysts in the last year. She has previously taught courses including Numerical Methods and Data Analytics & Visualization at the University of South Florida and UC Berkeley Extension.